My models are typically “unstructured grids”, and I normally assume that on a 64-bit architecture it should be possible to work with models of many millions, tens of millions, or even hundreds of millions of cells and/or points. Of course, depending on the processor and the operations involved, this can be very slow…

But now I have found a kind of “empirical limit” at a model size of about 10 million cells, or roughly 20 million cells plus points. It is not a precise limit so far, partly because testing takes a lot of time! With larger models I get crashes, not only with my own customized “Paraview derivative”, but also with the “original” Paraview 5.6.
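
As a rough plausibility check on these numbers, here is a back-of-the-envelope estimate of the memory needed for the bare geometry and connectivity of such a grid. It is only a sketch: the function name `estimate_grid_bytes` and every size in it (hexahedral cells with 8 points each, 64-bit ids, 32-bit coordinates, one byte per cell type) are my assumptions for illustration, not values measured in Paraview, and attribute arrays and rendering buffers are not included.

```python
# Rough estimate only; all sizes are assumptions, not Paraview measurements.

def estimate_grid_bytes(n_cells, n_points, points_per_cell=8,
                        id_bytes=8, coord_bytes=4):
    """Approximate bytes for geometry plus connectivity of an unstructured grid."""
    coords = n_points * 3 * coord_bytes                   # x, y, z per point
    connectivity = n_cells * points_per_cell * id_bytes   # point ids per cell
    offsets = (n_cells + 1) * id_bytes                    # one offset per cell plus end marker
    cell_types = n_cells * 1                              # one byte per cell type
    return coords + connectivity + offsets + cell_types


if __name__ == "__main__":
    for n_cells in (10_000_000, 100_000_000):
        n_points = n_cells  # assumption: about as many points as cells
        gib = estimate_grid_bytes(n_cells, n_points) / 2**30
        print(f"{n_cells:>11,d} cells: about {gib:.1f} GiB")
```

Under these assumptions a model of 10 million cells stays below 1 GiB for the raw grid, which is far from anything a 64-bit address space would limit. That would fit with the limit sitting somewhere other than the data model itself.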

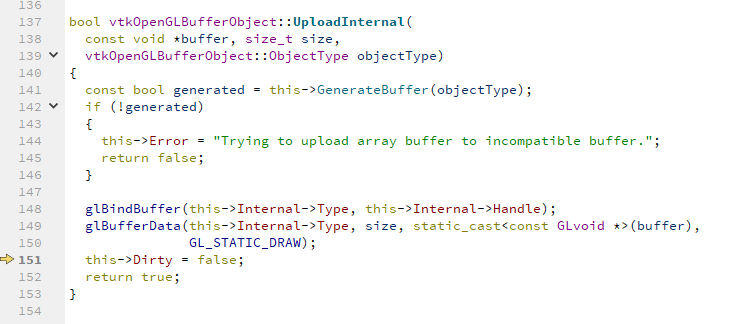

However, today I managed to catch one such crash in the debugger, because on Sunday my computer had nothing else to do. The location where it happens is exactly here:

My conclusion is: the crash is due to limitations of the graphics card (in a Windows 10 environment).

Do others agree with this?

For me this is mostly a case of “good to know in case the users have problems”, because I already tell users most of the time that they tend to go for too many, too small cells in order to do it “really good”, without considering the much lower precision of their input data.